
-

Building a realtime API with RethinkDB

RethinkDB is a modern NoSQL database that makes it easy to build realtime web services. One of its standout features is called Changefeeds. Applications can query tables for ongoing changes, and RethinkDB will push any changes to applications as they happen. The Changefeeds feature is interesting for many reasons:

- You don’t need a separate message queue to wake up workers that operate on new data.

- Database writes made from anywhere will propagate out as changes. Use the RethinkDB dashboard to muck with data? Run a migration script? Listeners will hear about it.

- Change events can be filtered and squashed within RethinkDB itself. In many cases it may be easier to filter events using ReQL than to use a message queue with filtering workers.
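As a rough illustration of the points above, here is a minimal sketch of consuming a changefeed from Python. The table name `scores`, the connection defaults, and the helper names `classify_change` and `watch_scores` are assumptions made for this example, not part of the article's code. It relies on the documented shape of change events, which carry an `old_val` and a `new_val` field.

```python
def classify_change(change):
    """Label a changefeed event as an insert, update, or delete.

    A change event carries 'old_val' and 'new_val'; a missing side
    (None) means the row did not exist before or after the write.
    """
    old = change.get("old_val")
    new = change.get("new_val")
    if old is None and new is not None:
        return "insert"
    if old is not None and new is None:
        return "delete"
    if old is not None and new is not None:
        return "update"
    return "unknown"


def watch_scores(host="localhost", port=28015, db="test", table="scores"):
    """Yield (kind, change) pairs as RethinkDB pushes them.

    Hypothetical helper: table name and connection settings are assumed.
    The driver is imported lazily so the rest of the module works
    without it. Older drivers use `import rethinkdb as r` instead.
    """
    from rethinkdb import RethinkDB

    r = RethinkDB()
    conn = r.connect(host=host, port=port, db=db)
    # changes() returns a cursor that blocks until the next write arrives.
    for change in r.table(table).changes().run(conn):
        yield classify_change(change), change
```

A worker could loop over `watch_scores()` and react to each event, with no separate message queue involved. Writes made from the dashboard or a migration script would show up in the same loop.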

This makes RethinkDB a compelling part of a realtime web service stack. In this article, we’ll describe how to use RethinkDB to implement a leaderboard API with realtime updates. Emphasis on API. Unlike other leaderboard examples you may have seen elsewhere, the focus here will be to create a clean API definition and use RethinkDB as part of the implementation. If you’re not sure what it means for an API to have realtime capabilities, check out this guide.

We’ll use the following components to build the leaderboard API:

- Database: RethinkDB, hosted on a Rackspace server.

- Web service: Django, hosted by Heroku.

- Realtime push to clients: Pushpin, hosted by Fanout Cloud.

Since the server app targets Heroku, we’ll be using environment variables for configuration and foreman for local testing.

Read on to see how it’s done. You can also look at the source.

-

Realtime API design guide

New to the subject of realtime APIs? This article is the place to start! We’ll discuss the most common design approaches and their pros/cons, as well as link to the documentation of 16 public realtime APIs that you can use for inspiration.

-

Stateless WebSockets with Express and Pushpin

One of the most interesting features of the Pushpin proxy is its ability to gateway between WebSocket clients and plain HTTP backend servers. In this article, we’ll demonstrate how to build a WebSocket service using Express as the HTTP backend behind Pushpin.
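To give a feel for what the HTTP backend sees, here is a small sketch of the event encoding used in the WebSocket-over-HTTP exchange between Pushpin and a backend. It is written in Python rather than Express so that it can stand alone, and the function names are made up for this example. It assumes the framing in which each event is a type name, optionally followed by a hexadecimal content length and the content, with CRLF delimiters.

```python
def encode_events(events):
    """Encode (type, content) pairs; content is bytes, or None if absent."""
    out = b""
    for etype, content in events:
        if content is None:
            out += etype.encode("ascii") + b"\r\n"
        else:
            size = format(len(content), "x").encode("ascii")  # hex length
            out += etype.encode("ascii") + b" " + size + b"\r\n" + content + b"\r\n"
    return out


def decode_events(body):
    """Decode a request or response body into (type, content) pairs."""
    events = []
    pos = 0
    while pos < len(body):
        end = body.index(b"\r\n", pos)
        header = body[pos:end].decode("ascii")
        pos = end + 2
        if " " in header:
            etype, size_hex = header.split(" ", 1)
            size = int(size_hex, 16)
            events.append((etype, body[pos:pos + size]))
            pos += size + 2  # skip the content and its trailing CRLF
        else:
            events.append((header, None))
    return events


def echo_handler(request_body):
    """Stateless echo: accept the connection and reflect text messages."""
    replies = []
    for etype, content in decode_events(request_body):
        if etype == "OPEN":
            replies.append(("OPEN", None))  # accept the WebSocket
        elif etype == "TEXT":
            replies.append(("TEXT", content))
    return encode_events(replies)
```

The backend handles each batch of events as an ordinary HTTP request and response, so it does not need to hold any connection open itself.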

-

Bayeux / Faye compatibility

Bayeux is one of the few standard protocols for publish-subscribe messaging on the web. Today we announce Bayeux compatibility in the Fanout Cloud realtime push service. When you publish JSON objects through our service, they can be received by any Bayeux-compatible client library, such as Faye.

-

Building a realtime API in Django

Django is an awesome framework for building web services using the Python language. However, it is not well-suited for handling long-lived connections, which are needed for realtime data push. In this article we’ll explain how to pair Django with Fanout Cloud to reach realtime nirvana.

-

Do you have a microservice contingency plan?

How many APIs does your company consume? How many microservices do you depend on? This brave new world of public APIs and microservices is great, but what happens when it’s not?

-

Calling Webhooks asynchronously with Zurl

Zurl is an HTTP client daemon based on libcurl that makes outbound HTTP requests asynchronously. It’s super useful for invoking Webhooks. Zurl supports fire-and-forget invocation, error monitoring, and protection from evil callback URLs. Sounds pretty great, right? Let’s see how it’s done.

-

Mongrel2 HTTP server now in Debian/Ubuntu

Mongrel2 is a fast and simple HTTP & WebSocket server that communicates with backend workers via ZeroMQ. It does one thing and does it very well, making it an ideal part of a componentized architecture. The code is event-driven, allowing it to support thousands of concurrent connections as well as asynchronous behaviors. These properties are especially important to realtime applications.

Fanout has been one of the most active contributors to the Mongrel2 project over the past year, adding features such as TLS SNI and improved streaming capability. We’ve also been working on making the server easier for people to get started with. And with that, we are proud to announce official packages for Debian and Ubuntu!

-

You might not need a WebSocket

Before I begin, I want to say that WebSockets are great. I’ve even implemented RFC 6455 myself in Zurl and Pushpin, which are used by Fanout Cloud.

However, after spending quite some time working on large distributed applications and gaining a greater appreciation of REST and messaging patterns, I feel that much of what typical web applications want to accomplish with WebSockets (or with socket-like abstractions) is perhaps better solved by other means.

-

Fun with Zurl, the HTTP/WebSocket client daemon

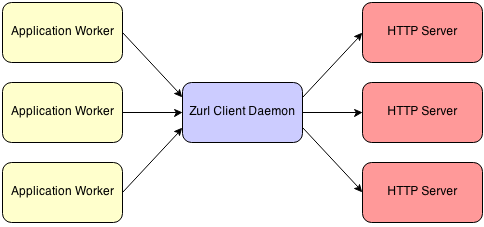

Zurl is a gateway that converts between ZeroMQ messages and outbound HTTP requests or WebSocket connections. It gives you powerful access to these protocols from within a message-oriented architecture. The following diagram shows how Zurl may fit in among the entities involved:

Any number of workers can contact any number of HTTP or WebSocket servers, and Zurl will perform conversions to/from ZeroMQ messages as necessary. It uses libcurl under the hood.

The format of the messages exchanged between workers and Zurl is described by the ZHTTP protocol. ZHTTP is an abstraction of HTTP using JSON-formatted or TNetString-formatted messages. The protocol makes it easy to work with HTTP at a high level, without needing to worry about details such as persistent connections or chunking. Zurl takes care of those things for you when gatewaying to the servers.
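For a sense of what a worker sends, here is a minimal sketch of building a JSON-formatted ZHTTP request in Python. The helper names, the `ipc:///tmp/zurl-req` endpoint, and the exact field set (`id`, `method`, `uri`, `headers`, `body`) are assumptions for illustration. It also assumes that JSON-formatted messages are prefixed with the letter `J`. Check the ZHTTP specification and your Zurl configuration before relying on any of these.

```python
import json
import uuid


def encode_zhttp_request(method, uri, headers=None, body=None, req_id=None):
    """Build a JSON-formatted ZHTTP request as bytes.

    headers is a list of (name, value) pairs. The leading b"J" marks
    the payload as JSON (assumed framing).
    """
    req = {
        "id": req_id or str(uuid.uuid4()),
        "method": method,
        "uri": uri,
    }
    if headers:
        req["headers"] = [[name, value] for name, value in headers]
    if body is not None:
        req["body"] = body
    return b"J" + json.dumps(req).encode("utf-8")


def decode_zhttp_message(raw):
    """Parse a JSON-formatted ZHTTP message back into a dict."""
    if raw[:1] != b"J":
        raise ValueError("not a JSON-formatted ZHTTP message")
    return json.loads(raw[1:].decode("utf-8"))


def send_request(raw, endpoint="ipc:///tmp/zurl-req"):
    """Send one request to Zurl over a REQ socket and return the reply.

    Hypothetical helper: the endpoint is an assumed default, and pyzmq
    is imported lazily so the encoding functions work without it.
    """
    import zmq

    sock = zmq.Context.instance().socket(zmq.REQ)
    sock.connect(endpoint)
    try:
        sock.send(raw)
        return decode_zhttp_message(sock.recv())
    finally:
        sock.close()
```

The worker only describes the request it wants made. Connection reuse and chunked transfer are left to Zurl.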
