subscribe via RSS

-

We've been acquired by Fastly

I’m excited to share that Fanout is now part of Fastly! Over the coming months we’ll be rolling out our realtime/push capabilities onto their global network.

See also Fastly’s announcement.

-

A cloud-native platform for push APIs

Today we are excited to announce Fanout Platform!

Fanout Platform is infrastructure software for building HTTP streaming and WebSocket APIs. It is comprised of our open source project, Pushpin, along with special add-ons for scaling. Helm charts are available for installation on Kubernetes.

Here’s a short video that shows how easy it is to upgrade from Pushpin to Fanout Platform:

Read on to learn about why we are doing this, and how it works.

-

Rewriting Pushpin's connection manager in Rust

For over 7 years, Pushpin used Mongrel2 for managing HTTP and WebSocket client connections. Mongrel2 served us well during that time, requiring little maintenance. However, we are now in the process of replacing it with a new project, Condure. In this article we’ll discuss how and why Condure was developed.

-

Reliable Server-Sent Events for Django

Today we’re pleased to introduce Django EventStream, a module that provides Server-Sent Events capability to your Django server application. It relies on Pushpin or Fanout Cloud to manage the client connections. Events can optionally be persisted to your database, for highly reliable delivery.

-

Pushpin reliable streaming

Earlier this month we discussed the challenges of pushing data reliably. Fanout products such as Pushpin (and Fanout Cloud, which runs Pushpin) do not entirely insulate developers from these challenges, as it is not possible to do so within our scope. However, we recently devised a way to reduce the pain involved.

Realtime APIs usually require receivers to juggle two data sources if they want to receive data reliably. For example, a client might listen for updates using a best-effort streaming API, and recover data using a REST API. So we thought, what if Pushpin could manage these two data sources, such that the client only needs to worry about one?

-

Moving from polling to long-polling

If you’ve built a REST API that clients poll for updates, you’ve probably considered adding a realtime push mechanism. Maybe you’ve been putting it off due to the added complexity, or the impact it might have on your API contract. These are valid concerns, but push doesn’t have to be that complicated.

In this article we’ll discuss how to update an API to use long-polling. It assumes:

- You have an existing REST API.

- You have clients repeatedly polling this API.

Long-polling is not the same as “plain” polling. With long-polling, the server delays the response to the client if there is no new data yet. This enables the server to respond instantly whenever the data does change. Aside from providing actual realtime updates, what’s great about long-polling is that technically it’s still RESTful, requiring hardly any changes to your API contract or client code.

Of course, long-polling may not be as efficient as streaming mechanisms like Server-Sent Events or WebSockets, but it’s inarguably more efficient than plain-polling. Let’s compare:

Mechanism Latency Load Plain-polling As high as the polling interval (e.g. 5 second interval means updates will be up to 5 seconds late) High Long-polling Zero Order of magnitude reduction Long-polling is a great way to dip your feet in the realtime waters without having to dramatically change your API contract and client code.

-

Serverless WebSockets

Serverless development is a hot topic lately. Development & operations of a web service can be greatly simplified by writing your application logic as short-lived functions, and relying on outside organizations for the development of all the other components in your stack (e.g. databases, gateways, container engines, etc). The term “serverless” is a bit funny because of course there are still servers in your stack, and they may even be your own servers, but the main idea is you no longer have to worry about your own long-running application code.

This all sounds great, but an issue arises: in this serverless world, how do you support long-lived connections (e.g. HTTP streaming/WebSocket connections) for realtime data push, without long-running application code? By delegating connection management to another component, of course! In this article we’ll talk about how to build a simple WebSocket service with Pushpin, using Microcule for running the backend worker function.

-

Streaming historical data

One of the most useful features of Pushpin is the ability to combine a request for historical data with a request to listen for updates. For example, an HTTP streaming request can respond immediately with some initial data before converting into a pubsub subscription. As of version 1.12.0, this ability is made even more powerful:

- Stream hold responses (

Grip-Hold: stream) from the origin server can now have a response body of unlimited size. This works by streaming the body from the origin server to the client before processing the GRIP instruction headers. Note that this only works forstreamholds. Forresponseholds, the response body is still limited to 100,000 bytes. - Responses from the origin server may contain a

nextlink using theGrip-Linkheader, to tell Pushpin to make a request to a specified URL after the current request to the origin finishes, and to leave the request with the client open while doing this. The response body of any such subsequent request is appended to the ongoing response to the client. This enables the server to reply with a large response to the client by serving a bunch of smaller chunks to Pushpin, and it also allows the server to defer the preparation of GRIP hold instructions until a later request in the session.

- Stream hold responses (

-

Pushpin and RethinkDB meetup video

A few months ago I presented at the RethinkDB meetup on the topic of building realtime APIs. Fortunately the talk was recorded and the video is below. Enjoy!

-

Realtime API management with Pushpin and Kong

Pushpin is the open source reverse proxy for the realtime web. One of the benefits of Pushpin functioning as a proxy is that it can be combined with an API management system, such as Mashape’s Kong. Kong is the open source management layer for APIs. To use Kong with Pushpin, simply chain the two together on the same network path.

Why would you want to use an API management system with Pushpin? Realtime web services have many of the same concerns as request/response web services, and it can be helpful to centrally manage those aspects.

-

Building a realtime API with RethinkDB

RethinkDB is a modern NoSQL database that makes it easy to build realtime web services. One of its standout features is called Changefeeds. Applications can query tables for ongoing changes, and RethinkDB will push any changes to applications as they happen. The Changefeeds feature is interesting for many reasons:

- You don’t need a separate message queue to wake up workers that operate on new data.

- Database writes made from anywhere will propagate out as changes. Use the RethinkDB dashboard to muck with data? Run a migration script? Listeners will hear about it.

- Filtering/squashing of change events within RethinkDB. In many cases it may be easier to filter events using ReQL than using a message queue and filtering workers.

This makes RethinkDB a compelling part of a realtime web service stack. In this article, we’ll describe how to use RethinkDB to implement a leaderboard API with realtime updates. Emphasis on API. Unlike other leaderboard examples you may have seen elsewhere, the focus here will be to create a clean API definition and use RethinkDB as part of the implementation. If you’re not sure what it means for an API to have realtime capabilities, check out this guide.

We’ll use the following components to build the leaderboard API:

- Database: RethinkDB, hosted on a Rackspace server.

- Web service: Django, hosted by Heroku.

- Realtime push to clients: Pushpin, hosted by Fanout Cloud.

Since the server app targets Heroku, we’ll be using environment variables for configuration and foreman for local testing.

Read on to see how it’s done. You can also look at the source.

-

Stateless WebSockets with Express and Pushpin

One of the most interesting features of the Pushpin proxy is its ability to gateway between WebSocket clients and plain HTTP backend servers. In this article, we’ll demonstrate how to build a WebSocket service using Express as the HTTP backend behind Pushpin.

-

Pushing to 100,000 API clients simultaneously

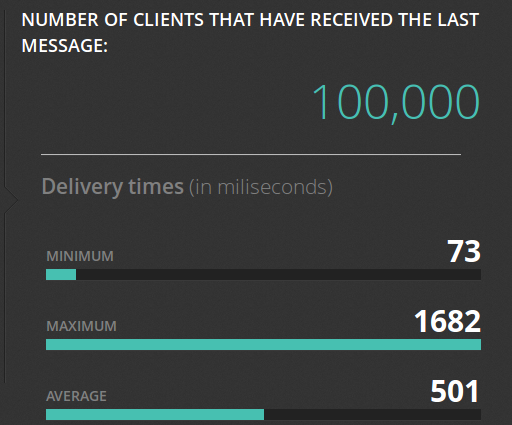

Earlier this year we announced the open source Pushpin project, a server component that makes it easy to scale out realtime HTTP and WebSocket APIs. Just what kind of scale are we talking about though? To demonstrate, we put together some code that pushes a truckload of data through a cluster of Pushpin instances. Here’s the output of its dashboard after a successful run:

Before getting into the details of how we did this, let’s first establish some goals:

- We want to scale an arbitrary realtime API. This API, from the perspective of a connecting client, shouldn’t need to be in any way specific to the components we are using to scale it.

- Ideally, we want to scale out the number of delivery servers but not the number of application servers. That is, we should be able to massively amplify the output of a modest realtime source.

- We want to push to all recipients simultaneously and we want the deliveries to complete in about 1 second. We’ll shoot for 100,000 recipients.

To be clear, sending data to 100K clients in the same instant is a huge level of traffic. Disqus recently posted that they serve 45K requests per second. If, using some very rough math, we say that a realtime push is about as heavy as half of a request, then our demonstration requires the same bandwidth as the entire Disqus network, if only for one second. This is in contrast to benchmarks that measure “connected” clients, such as the Tigase XMPP server’s 500K single-machine benchmark, where the clients participate conservatively over an extended period of time. Benchmarks like these are impressive in their own right, just be aware that they are a different kind of demonstration.

-

An HTTP reverse proxy for realtime

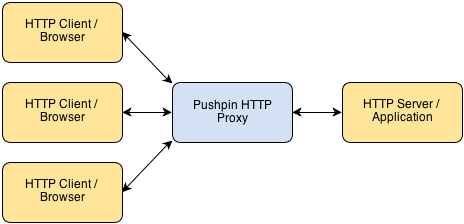

Pushpin makes it easy to create HTTP long-polling, HTTP streaming, and WebSocket services using any web stack as the backend. It’s compatible with any framework, whether Django, Rails, ASP, PHP, Node, etc. Pushpin works as a reverse proxy, sitting in front of your server application and managing all of the open client connections.

Communication between Pushpin and the backend server is done using conventional short-lived HTTP requests and responses. There is also a ZeroMQ interface for advanced users.

The approach is powerful for several reasons:

- The application logic can be written in the most natural way, using existing web frameworks.

- Scaling is easy and also natural. If your bottleneck is the number of recipients you can push realtime updates to, then add more Pushpin instances.

- It’s highly versatile. You define the HTTP/WebSocket exchanges between the client and server. This makes it ideal for building APIs.

• filed under

• filed under